With the release of 2026.2 the way BiRefNet works has changed slightly. The extra matchbox nodes are no longer needed for pre/post processing, and those parameters have migrated into the json file packaged with the .inf file.

I have uploaded several new variations for 2026.2. I believe they are backwards compatible with 2026: older versions still need the matchboxes and will just ignore the new json segments.

One new variation is an fp16 model, which is smaller and runs faster. All ONNX files come from the official repository; I only re-wrapped them for Flame.

The 2026.2 files are the new models. We’ll delete the old one to avoid confusion.

One note - BiRefNet is not temporally stable, so it’s a mixed bag for video. You need to inspect your matte carefully, but there can still be valid use cases.

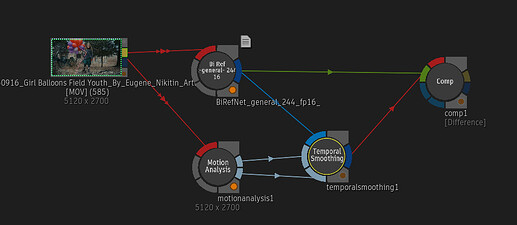

I also did some testing with the new ‘TemporalSmoothing’ node added in 2026.2. Unfortunately it doesn’t seem to work very well in this case, so it’s not a definitive workaround for BiRefNet’s limitations.

This is the test case I used:

I tried all the smoothing modes, and ‘weighted average’ gets close, but can still leave matte artifacts.
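To illustrate why a weighted average can only get close, here is a minimal Python sketch of a 3-frame weighted average applied to a matte that drops out for a single frame. This is not how Flame's TemporalSmoothing node is implemented; the function names, the weights, and the one-pixel test clip are all assumptions made up for the illustration.

```python
def temporal_weighted_average(frames, weights=(0.25, 0.5, 0.25)):
    """Blend each frame with its neighbours (hypothetical helper).

    frames: list of frames, each a flat list of matte values in [0, 1].
    weights: odd-length kernel centred on the current frame, assumed to sum to 1.
    """
    radius = len(weights) // 2
    last = len(frames) - 1
    smoothed = []
    for t in range(len(frames)):
        out = [0.0] * len(frames[t])
        for k, w in enumerate(weights):
            # At the start and end of the clip, repeat the edge frame.
            src = frames[min(max(t + k - radius, 0), last)]
            for i, value in enumerate(src):
                out[i] += w * value
        smoothed.append(out)
    return smoothed


def flicker(frames):
    """Mean absolute frame-to-frame change, a rough instability measure."""
    total = 0.0
    count = 0
    for a, b in zip(frames, frames[1:]):
        total += sum(abs(x - y) for x, y in zip(a, b))
        count += len(a)
    return total / count if count else 0.0


# One pixel whose matte value drops out for a single frame (made-up data).
clip = [[1.0], [1.0], [0.0], [1.0], [1.0]]
smoothed = temporal_weighted_average(clip)

print(smoothed)        # [[1.0], [0.75], [0.5], [0.75], [1.0]]
print(flicker(clip))   # 0.5
print(flicker(smoothed))  # 0.25
```

In this toy case the flicker is halved, but the one-frame hole is not removed. It is spread across three frames as a softer dip, which is the kind of residual matte artifact you would still need to check for.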

Hope you all find these new model uploads useful (access them via the Logik Portal).